Expert

A Key Tool for Intellect Solutions

Expert is the key desktop tool for experts. Use it to configure data virtualization and data handling (logic, math, pre-processing, cleansing, conversion and event detection), perform predictive and cluster modeling, create state detectors, and set up on-line reporting tasks. Save your solutions to disk and later use Designer to put them on-line in the Intellect Server.

Configure Data Virtualization

Intellect organizes variables and tasks within a hierarchical structure of contexts. A context is an element of your operations. A top-level context might be your company; within it are locations (a plant, an oil and gas platform), and within a location you may have one or more production lines, wells or other unit operations. By defining contexts, you can select a context and map in the variables that belong specifically to it. A plant may have common utilities, for example, or a platform may have production separators, while a unit operation may have various pressures, temperatures and flows. Organizing your variables in this way makes them easy to find and interpret, and makes their roles easy to understand. You will find similar logical structures in OPC "Groups" and in the ISA 95 standard.

When mapping in variables, you select a context and then access the data source, such as OSISoft PI, or Oracle, or others that are supported. The information you use to log in and query the source is remembered. This is the data virtualization aspect. Later, when you select a variable from the context tree, Intellect knows where it is, and how to get it.

Additionally, you can set properties of these variables, such as a meaningful name and a meaning. On Well A, you may have half a dozen variables whose meanings are identical to those of variables on Wells B, C, D, E, ... By setting meanings, you can select another context, right-click and switch to the other context's variables.
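The context tree and the "meaning" property can be pictured with a small data structure. This is only an illustrative sketch, not Intellect's actual data model: the class names, field names, tag names and the `switch_context` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Variable:
    name: str     # meaningful display name
    source: str   # hypothetical label for the data source
    tag: str      # hypothetical tag or column name in that source
    meaning: str  # role shared by equivalent variables in other contexts


@dataclass
class Context:
    name: str
    # The parent link is excluded from repr/compare to avoid cycles.
    parent: Optional["Context"] = field(default=None, repr=False, compare=False)
    children: Dict[str, "Context"] = field(default_factory=dict)
    variables: Dict[str, Variable] = field(default_factory=dict)

    def add_child(self, name: str) -> "Context":
        child = Context(name, parent=self)
        self.children[name] = child
        return child

    def add_variable(self, var: Variable) -> None:
        # Index by meaning so the equivalent variable can be found elsewhere.
        self.variables[var.meaning] = var

    def path(self) -> str:
        parts = []
        node = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return "/".join(reversed(parts))


def switch_context(selected: List[Variable], target: Context) -> List[Variable]:
    """Map each selected variable to the target's variable with the same meaning."""
    return [target.variables[v.meaning] for v in selected
            if v.meaning in target.variables]


# Example tree: company -> platform -> two wells with equivalent variables.
company = Context("Company")
platform = company.add_child("Platform 1")
well_a = platform.add_child("Well A")
well_b = platform.add_child("Well B")
well_a.add_variable(Variable("Well A tubing pressure", "historian", "WA.PT101", "tubing_pressure"))
well_b.add_variable(Variable("Well B tubing pressure", "historian", "WB.PT101", "tubing_pressure"))

selected = [well_a.variables["tubing_pressure"]]
switched = switch_context(selected, well_b)
print(well_b.path(), [v.tag for v in switched])
```

Because variables are looked up by meaning rather than by tag, the same selection can be re-pointed at any context that defines the same meanings.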

All this information is stored in the Intellect database for future use, either by you, or by Intellect itself while operating.

Create Data Handling Solutions

Once you have data virtualization established for the variables you are working with, you can access any number of them simultaneously by selecting them from the context tree. Intellect knows where they are, extracts the data, typically between a start and an end time, and synchronizes them into a unified working set. You can then apply a wide range of functions to them to build data handling schemes. These schemes can be put on-line or used to build models, state detectors, reports and more. There are about 150 different functions, which you can apply in any order and any number of times, resulting in near-infinite possibilities.
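As a rough illustration of what synchronizing variables into a unified working set involves, the sketch below averages irregular samples into fixed time buckets and then fills gaps by pulling the last value forward. It uses only the Python standard library; the function names and sample data are assumptions made for this example and do not describe Intellect's internals.

```python
from datetime import datetime, timedelta
from statistics import mean


def regularize(samples, start, end, step):
    """Average (timestamp, value) samples into buckets of width `step` over [start, end)."""
    n = (end - start) // step
    buckets = [[] for _ in range(n)]
    for ts, value in samples:
        if start <= ts < end:
            buckets[(ts - start) // step].append(value)
    # Empty buckets become None so gaps stay visible.
    return [mean(b) if b else None for b in buckets]


def pull_forward(values):
    """Fill gaps (None) with the most recent earlier value."""
    filled, last = [], None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


def synchronize(series, start, end, step):
    """Return rows of (bucket_start, {name: value}) on one shared time grid."""
    columns = {name: pull_forward(regularize(s, start, end, step))
               for name, s in series.items()}
    n = (end - start) // step
    return [(start + i * step, {name: col[i] for name, col in columns.items()})
            for i in range(n)]


# Two variables sampled at different, irregular times.
t0 = datetime(2024, 1, 1, 0, 0)
minute = timedelta(minutes=1)
series = {
    "pressure": [(t0, 10.0), (t0 + timedelta(seconds=30), 12.0), (t0 + 2 * minute, 14.0)],
    "flow": [(t0 + timedelta(seconds=10), 5.0), (t0 + timedelta(seconds=70), 7.0)],
}
rows = synchronize(series, t0, t0 + 3 * minute, minute)
for bucket_start, values in rows:
    print(bucket_start, values)
```

Every row now carries one value per variable at the same timestamp, which is the shape that later steps such as smoothing or modeling need.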

Build Predictive Regression Models

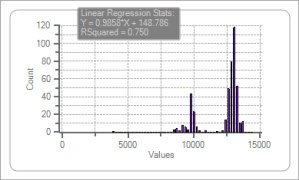

Expert provides the capability to build multivariate linear and non-linear predictive regression models. The modeling technology is unique in that it searches through combinations of alternative inputs, model types and structures to maximize the predictive accuracy of your results. The modeling engine builds M models, selects the best subset and puts them into an "ensemble" whose members are merged into the final output(s). This committee of models improves robustness and smoothness without introducing any time lag. With a click of a button, you can document your models in an extensive Microsoft Word report, including the inputs used, their importance, charts and graphs, and error and accuracy histograms.

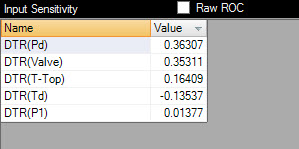
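The ensemble idea (build several models, keep the best subset, merge their outputs) can be sketched in a few lines. The example below assumes the candidate models already exist as plain functions, ranks them by validation RMSE and averages the survivors. It is a simplified stand-in: Expert's own search and merging method is not described here, and the names `rmse` and `build_ensemble` are hypothetical.

```python
from statistics import mean


def rmse(model, rows, targets):
    """Root-mean-square error of `model` on validation data."""
    return mean((model(r) - t) ** 2 for r, t in zip(rows, targets)) ** 0.5


def build_ensemble(candidates, val_rows, val_targets, keep):
    """Keep the `keep` candidates with the lowest validation RMSE and average them."""
    ranked = sorted(candidates, key=lambda m: rmse(m, val_rows, val_targets))
    members = ranked[:keep]

    def ensemble(row):
        return mean(m(row) for m in members)

    return ensemble, members


# Hypothetical candidates for a true relationship y = 2 * x.
candidates = [
    lambda r: 2.0 * r[0] + 1.0,  # reads slightly high
    lambda r: 2.0 * r[0] - 1.0,  # reads slightly low
    lambda r: 5.0 * r[0],        # poor fit
    lambda r: 0.0,               # poor fit
]
val_rows = [(1.0,), (2.0,), (3.0,)]
val_targets = [2.0, 4.0, 6.0]

ensemble, members = build_ensemble(candidates, val_rows, val_targets, keep=2)
print(ensemble((4.0,)))  # 8.0
```

In this toy case the two retained models err in opposite directions, so their average lands on the true value. Averaging works on each prediction independently, which is why it adds no time lag.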

Identify Key Drivers of Performance

Expert automatically analyzes input sensitivities (the partial derivative of performance with respect to each input) and ranks them into a list of the key drivers of your performance. You can use this information to understand which variables are important and which are not, and to determine which factors are causing problems.

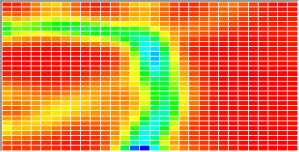
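A sensitivity ranking of this kind can be approximated numerically for any model that can be evaluated at a point. The sketch below estimates each partial derivative with a central finite difference and normalizes the absolute values so they sum to 1. The model, the input names and the operating point are made up for illustration, and how Expert computes and scales its sensitivities is not specified here.

```python
def sensitivities(model, point, rel_step=1e-4):
    """Central-difference estimate of d(output)/d(input) at `point` for each input."""
    result = {}
    for name, value in point.items():
        h = rel_step * (abs(value) or 1.0)  # step scaled to the input's size
        up = dict(point, **{name: value + h})
        down = dict(point, **{name: value - h})
        result[name] = (model(up) - model(down)) / (2 * h)
    return result


def rank_drivers(sens):
    """Normalize absolute sensitivities to sum to 1 and sort, largest first."""
    total = sum(abs(s) for s in sens.values()) or 1.0
    shares = {name: abs(s) / total for name, s in sens.items()}
    return sorted(shares.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical performance model: flow has no effect on the output.
def model(x):
    return 3.0 * x["temperature"] - 1.0 * x["pressure"] + 0.0 * x["flow"]


point = {"temperature": 80.0, "pressure": 12.0, "flow": 3.0}
ranked = rank_drivers(sensitivities(model, point))
for name, share in ranked:
    print(f"{name}: {share:.2f}")
```

Raw derivatives depend on each input's units, so in practice inputs are usually scaled (for example by their operating range) before the ranking is compared across variables.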

Build Clustering Models

Expert also enables you to build cluster models, "Self-Organizing Maps", which can be used to group similar data for data reduction or to identify states driven purely by similar operating conditions. You can build cluster maps, use a slider to group the data into clusters, and then export the data to disk with the cluster IDs assigned. Data can be reduced, turning bigger datasets into smaller ones and balancing the frequencies of data points.

Build State Detectors

When a process operates in various states it generates "multi-modal" data, that is, numerous distributions of process or product characteristics. Using a novel detection algorithm we call "draining the pool", these states can be identified by detecting and characterizing the numerous peaks. These state detectors can be put on-line to know when certain process or product behaviors are happening, which can then be used to drive other logic in the Intellect system. In some cases customers use this information to decide what grade (and brand) of product they are producing based on product performance.
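To make the idea of peaks in multi-modal data concrete, here is a deliberately simple stand-in: build a histogram, treat sufficiently tall local maxima as states, and label a new value by its nearest peak. This is not the "draining the pool" algorithm, whose details are not given here; the function names, bin settings and data are assumptions for illustration only.

```python
def histogram(values, lo, hi, bins):
    """Count values into `bins` equal-width bins over [lo, hi)."""
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        if lo <= v < hi:
            counts[min(int((v - lo) / width), bins - 1)] += 1
    return counts, width


def find_peaks(counts, min_count):
    """Bins with at least `min_count` that exceed the left neighbour and are not below the right."""
    peaks = []
    for i, c in enumerate(counts):
        left = counts[i - 1] if i > 0 else 0
        right = counts[i + 1] if i < len(counts) - 1 else 0
        if c >= min_count and c > left and c >= right:
            peaks.append(i)
    return peaks


def detect_state(value, peak_centers):
    """Label a value with the index of the nearest peak center."""
    return min(range(len(peak_centers)), key=lambda i: abs(value - peak_centers[i]))


# Made-up measurements clustered around two operating states, plus one stray point.
values = [9.8, 10.0, 10.1, 10.2, 10.3, 19.7, 20.0, 20.1, 20.2, 20.4, 15.0]
lo, hi, bins = 5.0, 25.0, 10
counts, width = histogram(values, lo, hi, bins)
peaks = find_peaks(counts, min_count=3)
centers = [lo + (i + 0.5) * width for i in peaks]

print(centers)
print(detect_state(11.2, centers), detect_state(18.9, centers))
```

The `min_count` threshold keeps the stray point at 15.0 from being reported as a state of its own. A production detector would also need to characterize the width of each peak, which this sketch omits.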

Build On-Line Reporting Plans

You can create comparative reports that are issued autonomously on-line under certain conditions. For example, Intellect may be monitoring the state of a process, detect the start of an event and then its end, and then fire off a report of model performance vs. test results.

"The Lego Blocks of Process Optimization"

~ M.T., Kuala Lumpur, Malaysia

"Very Clever"

~ R.H., Sunbury, England

"This will be my legacy"

~ E.Z., Houston, TX

Send an email to tech@intellidynamics.net

Advantages and Benefits

- Define data sources just once, then access variables and tags from then on with no effort or concerns

- Data virtualization works across all products and is used autonomously by the Intellect Server

- Relate data from one operation to another

- Access and synchronize your data into a unified data set

- Clean and filter your data so you are using valid, high quality data

- Pre-process your data into near-infinite possibilities

- Build models, visualize and understand key drivers of your performance

- Detect process and product states

- Cluster your data to reduce "Big Data" into "Small, Potent Data"

- Build autonomous reporting solutions

Functionals

- Simultaneous data access to files, workbooks, relational databases, OSISoft PI and other historians

- Data synchronization into unified data sets regardless of where the data came from

- Data preprocessing using a wide array of functions with billions of permutations

- Numerous math functions: Change, SQRT, ln, LOG, ...

- Numerous smoothing functions: SMA, AMA, XMA, LWMA, ...

- Numerous trig functions: Sine, Cosine, Tangent, ArcSine, ArcCosine, ArcTangent, ...

- Walking windowed: Z score, Slope, Mean, Median, Min, Max, ...

- Binary 0-1 or -1 to +1 based on thresholds for probability estimation or conditional logic.

- Clipping filters (keep inside or outside of limits)

- Date-Time Regularizer (puts the data onto a consistent interval, usually by averaging in time “buckets”)

- ADOExpressions (SQL calculation language)

- Totalizer to Rate

- Pull Forward to fill gaps

- Many more rare and useful functions, all with a click or two

- Lead/Lag correlation analysis and plots to discover time lags

- Ability to set candidate inputs as Optional, Mandatory, Ignored.

- Ability to set constraints on the directional effect of inputs (1st Principles enforcement)

- Genetic Algorithm input and model type and structure search seeking best performing models

- Numerous model types; classifiers, regressors, clustering & mapping

- Ensembles (committees) of unlimited models averaged for estimate robustness

- Multiple model performance metrics, ability to sort and remove models

- Triple data set validation with sequential or random sampling

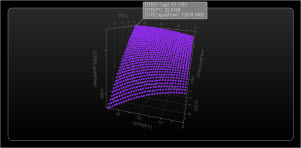

- 3D Response surfaces of model outputs as selected inputs vary

- Multiple means to limit the number of inputs used to focus on key drivers

- Sensitivity analysis with normalized ranking to know key drivers

- Out of Sample Statistics and Chart

- Data Visualization

- Trend plots, by row or time

- Histograms

- Scatter plots

- 3D response curves and surfaces

- Trends and performance histograms of predicted vs. desired

- Scatter plots of predicted vs. desired and error vs. any input

- Microsoft Word modeling reports

- Self Organizing Maps with cluster analysis and heat maps
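The lead/lag correlation analysis listed above can be illustrated with a short sketch: shift one signal against another, compute the Pearson correlation at each lag, and report the lag with the strongest correlation. The function names and the sample data are hypothetical, and this is a generic textbook approach rather than a description of Expert's implementation.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def best_lag(driver, response, max_lag):
    """Correlate response[t] with driver[t - lag] for each lag in 0..max_lag.

    Both series must have the same length and sampling interval.
    Returns (lag, r) for the lag with the largest absolute correlation.
    """
    scores = {}
    for lag in range(max_lag + 1):
        xs = driver[: len(driver) - lag]
        ys = response[lag:]
        scores[lag] = pearson(xs, ys)
    best = max(scores, key=lambda k: abs(scores[k]))
    return best, scores[best]


# Made-up data: the response repeats the driver two samples later.
driver = [0, 1, 0, 2, 0, 3, 1, 0, 2, 1, 0, 4]
response = [0, 0] + driver[:-2]

lag, r = best_lag(driver, response, max_lag=4)
print(lag, round(r, 3))
```

The series should be on a common, regular time grid before this is applied, since a lag counted in samples only has meaning when the sample interval is constant.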

Technicals

- Runs on Vista, Windows 7, Windows 8 and 8.1, Server and Desktop

- Operates with local or remote Intellect Servers on your network

- Memory requirements depend on the quantity of data: 4 GB minimum, 8 GB recommended; more is beneficial if using the 64-bit version

- Multi-Core

- 32-bit or 64-bit versions available